The other night I attended a press dinner hosted by an enterprise company called Box. Other guests included the leaders of two data-oriented companies, Datadog and MongoDB. Typically, executives at such soirées are on their best behavior, especially when the discussion is on the record, as it was in this case. So I was startled by an exchange with Box CEO Aaron Levie, who told us he had a hard stop at dessert because he was flying to Washington, DC, that night. He was heading to a special-interest event called TechNet Day, where Silicon Valley gets to speed-date with dozens of members of Congress to shape what the (uninvited) public will have to live with. And what did he want from that legislation? “As little as possible,” Levie replied. “I will be single-handedly responsible for stopping the government.”

He was joking about that. Sort of. He went on to say that while it makes sense to regulate obvious abuses of AI like deepfakes, it is far too early to consider restraints like forcing companies to submit large language models to government-approved AI cops, or scanning chatbots for things like bias or the ability to hack real-world infrastructure. He cited Europe, which has already adopted restrictions on AI, as an example of what not to do. “What Europe is doing is quite risky,” he said. “There is a view in the EU that if you regulate first, you create an atmosphere of innovation,” Levie said. “That has been empirically proven wrong.”

Levie's comments run counter to what has become the standard position among Silicon Valley AI elites like Sam Altman. “Yes, regulate us!” they say. But Levie points out that the consensus breaks down when it comes to what exactly the laws should say. “We as a tech industry do not know what we're actually asking for,” Levie said. “I have not been to a dinner with more than five AI people where there's a single agreement on how you would regulate AI.” Not that it matters: Levie believes that dreams of a sweeping AI bill are doomed. “The good news is that there's no way the US would ever be coordinated in this kind of way. There simply will not be an AI Act in the US.”

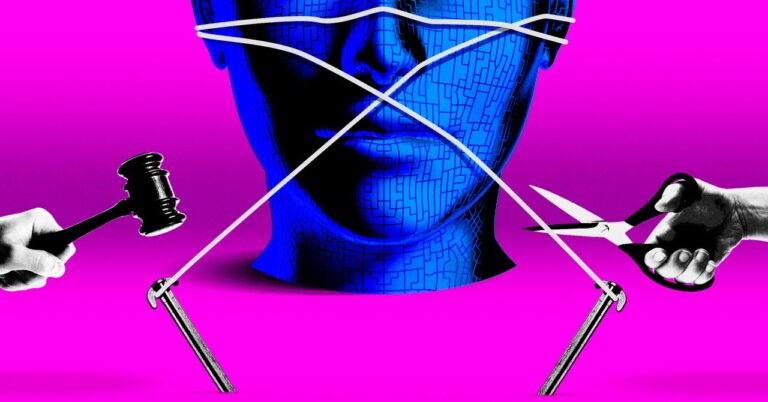

Levie is known for his irreverent loquaciousness. But in this case, he's simply more candid than many of his colleagues, whose “regulate us” position is a kind of sophisticated rope-a-dope. TechNet Day's only public event, at least as far as I could tell, was a livestreamed panel discussion on AI innovation. Participants included Kent Walker, Google's president of global affairs, and Michael Kratsios, the most recent US chief technology officer and now an executive at Scale AI. The feeling among these panelists was that the government should focus on preserving US leadership in the field. They acknowledged that the technology has risks, but argued that existing laws largely cover the potential harms.

Google's Walker seemed particularly alarmed that some states are developing AI laws of their own. “In California alone, there are 53 different AI bills pending in the legislature today,” he said, and he wasn't boasting. Walker, of course, knows that this Congress can barely keep the government itself afloat, and the prospect of both houses successfully juggling such a hot potato in an election year is as remote as Google rehiring the eight authors of the transformer paper.

The US Congress does have AI legislation pending. And the bills keep coming, some perhaps less meaningful than others. This week, Representative Adam Schiff, a California Democrat, introduced a bill called the Generative AI Copyright Disclosure Act of 2024. The bill would require makers of large language models to submit to the Copyright Office a “sufficiently detailed summary” of the works used in the training data set. It is not clear what “sufficiently detailed” means. Would it be OK to say, “We simply scraped the open web”? Schiff's staff explained to me that they are adopting a measure found in the EU's AI bill.